When you create a new pipeline, Integrate.io ELT & CDC performs an initial sync to load the full historical data from your source tables into the destination. Once the initial sync completes, the pipeline switches to continuous sync mode and begins capturing ongoing changes.

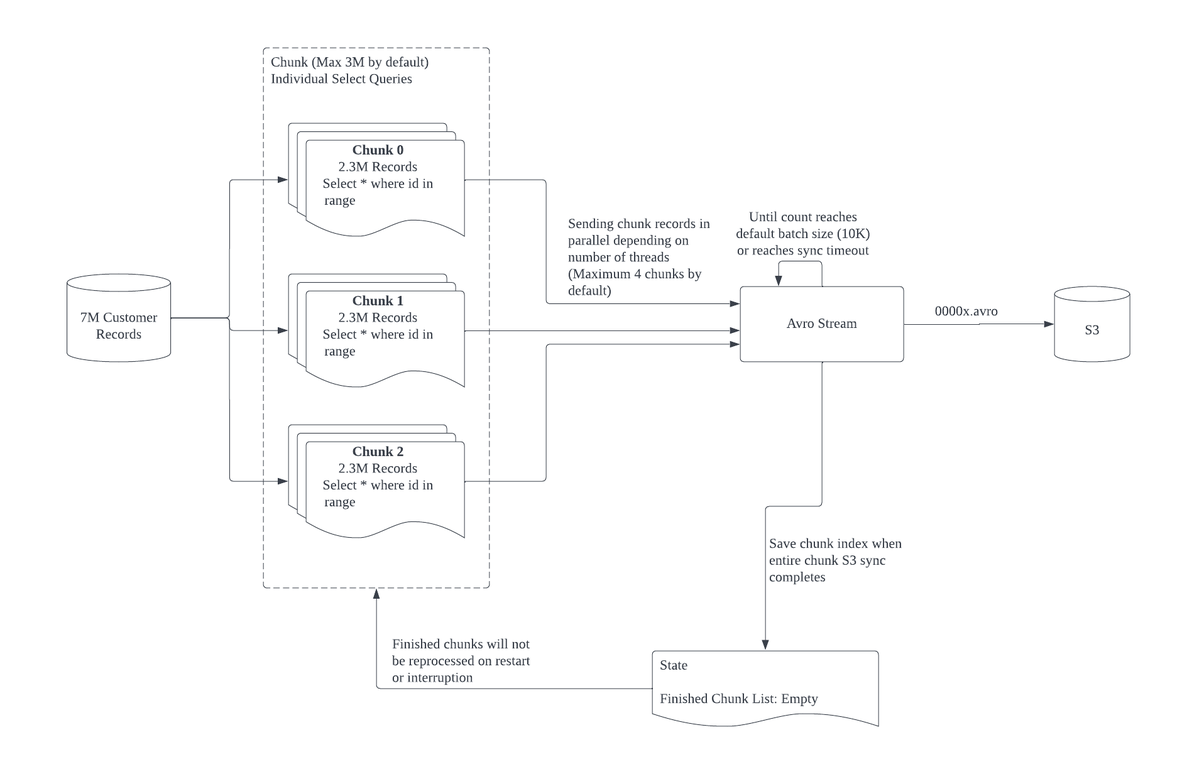

How chunking works

During initial sync, Integrate.io counts the number of records in a table and divides them into chunks of roughly equal size. Each chunk corresponds to a `SELECT` statement with a primary key range. Multiple chunks are processed in parallel, which allows large tables to sync faster than a single sequential read.
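
The chunking idea can be sketched in a few lines. This is a hypothetical illustration, not Integrate.io's implementation: the names `Chunk`, `plan_chunks`, `read_chunk`, `read_table_in_parallel`, and `rows_per_chunk` are invented here, an in-memory dict stands in for the source table, and splitting the key range evenly only yields roughly equal record counts when keys are densely packed. The real pipeline may choose chunk boundaries differently.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    lower: int  # inclusive lower bound of the primary key range
    upper: int  # inclusive upper bound of the primary key range

    def to_sql(self, table: str, pk: str) -> str:
        # Assumes trusted identifiers; real code must quote table/column names.
        return f"SELECT * FROM {table} WHERE {pk} BETWEEN {self.lower} AND {self.upper}"


def plan_chunks(min_pk: int, max_pk: int, row_count: int, rows_per_chunk: int) -> list[Chunk]:
    """Hypothetical: split [min_pk, max_pk] into contiguous, non-overlapping key ranges."""
    if rows_per_chunk < 1 or max_pk < min_pk:
        raise ValueError("need rows_per_chunk >= 1 and min_pk <= max_pk")
    span = max_pk - min_pk + 1
    # Enough chunks to hold row_count rows, but never more chunks than keys.
    num_chunks = min(max(1, -(-row_count // rows_per_chunk)), span)
    base, extra = divmod(span, num_chunks)
    chunks, lower = [], min_pk
    for i in range(num_chunks):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first chunks
        chunks.append(Chunk(lower, lower + size - 1))
        lower += size
    return chunks


def read_chunk(source: dict[int, str], chunk: Chunk) -> list[str]:
    # Stand-in for running chunk.to_sql(...) against the source database.
    return [source[k] for k in range(chunk.lower, chunk.upper + 1) if k in source]


def read_table_in_parallel(source: dict[int, str], chunks: list[Chunk], workers: int = 4) -> list[str]:
    # Chunks are read concurrently; pool.map returns results in chunk order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = list(pool.map(lambda c: read_chunk(source, c), chunks))
    return [row for part in parts for row in part]
```

For a 10-row table with keys 1 to 10 and 3 rows per chunk, `plan_chunks(1, 10, 10, 3)` produces four ranges (1 to 3, 4 to 6, 7 to 8, 9 to 10). Because the ranges are contiguous and use inclusive bounds, no row is read twice and none is skipped.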

Chunking is supported on tables with numeric primary keys (integer, big integer, medium integer). Tables with non-numeric primary keys are synced without chunking.
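
A minimal sketch of this eligibility rule, assuming MySQL-style spellings (`int`, `integer`, `bigint`, `mediumint`) for the integer, big integer, and medium integer types. The constant `CHUNKABLE_PK_TYPES`, the function `choose_read_strategy`, and its return values are hypothetical.

```python
# Assumed MySQL-style spellings of the numeric key types that support chunking.
CHUNKABLE_PK_TYPES = {"int", "integer", "bigint", "mediumint"}


def choose_read_strategy(pk_type: str) -> str:
    """Hypothetical: "chunked" for numeric primary keys, else "single" (no chunking)."""
    # Strip display width and modifiers: "BIGINT(20) UNSIGNED" -> "bigint".
    words = pk_type.lower().split("(")[0].split()
    if words and words[0] in CHUNKABLE_PK_TYPES:
        return "chunked"
    return "single"
```

For example, a `BIGINT(20) UNSIGNED` key would be chunked, while a `VARCHAR(36)` key holding UUIDs would be synced without chunking.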

Data flow stages

- Source read. Each chunk reads rows from the source database using a range query.

- Avro stream. Row data from all active chunks is serialized in Avro format and combined into batches.

- S3 staging. When a batch reaches the default maximum size or a sync timeout occurs, the batch is written to S3 as an Avro file.

- Destination load. Staged Avro files are loaded into the destination warehouse (Redshift, Snowflake, BigQuery, or S3).
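
The staging rule (flush a batch when it is full or when a timeout fires) can be sketched as a small thread-safe buffer. This is a hypothetical illustration, not Integrate.io's implementation: `BatchBuffer`, `max_bytes`, `timeout_s`, `on_flush`, and `tick` are invented names, rows are sized by their JSON encoding as a stand-in for Avro encoding, and `on_flush` stands in for the S3 write. The actual default batch size and timeout are not stated in this section.

```python
from __future__ import annotations

import json
import threading
import time
from typing import Callable, Optional


class BatchBuffer:
    """Hypothetical: buffers rows from all chunks and flushes on size or timeout."""

    def __init__(self, max_bytes: int, timeout_s: float,
                 on_flush: Callable[[list[dict]], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_bytes = max_bytes      # hypothetical size threshold per batch
        self.timeout_s = timeout_s      # hypothetical max age of a non-empty batch
        self.on_flush = on_flush        # stand-in for writing the batch to S3
        self.clock = clock
        self._lock = threading.Lock()   # chunks may add rows from several threads
        self._rows: list[dict] = []
        self._bytes = 0
        self._started: Optional[float] = None

    def add(self, row: dict) -> None:
        with self._lock:
            if self._started is None:
                self._started = self.clock()
            self._rows.append(row)
            # JSON size approximates the encoded size; real code would encode Avro.
            self._bytes += len(json.dumps(row).encode("utf-8"))
            if self._bytes >= self.max_bytes:
                self._flush_locked()

    def tick(self) -> None:
        """Call periodically; flushes a non-empty batch once the timeout elapses."""
        with self._lock:
            if self._started is not None and self.clock() - self._started >= self.timeout_s:
                self._flush_locked()

    def flush(self) -> None:
        with self._lock:
            self._flush_locked()

    def _flush_locked(self) -> None:
        if self._rows:
            self.on_flush(self._rows)
        self._rows, self._bytes, self._started = [], 0, None
```

Injecting `clock` keeps the timeout path testable without sleeping; in a real pipeline `tick()` would be driven by a timer so that a slow trickle of rows from the last chunks still reaches S3.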