Connection Setup

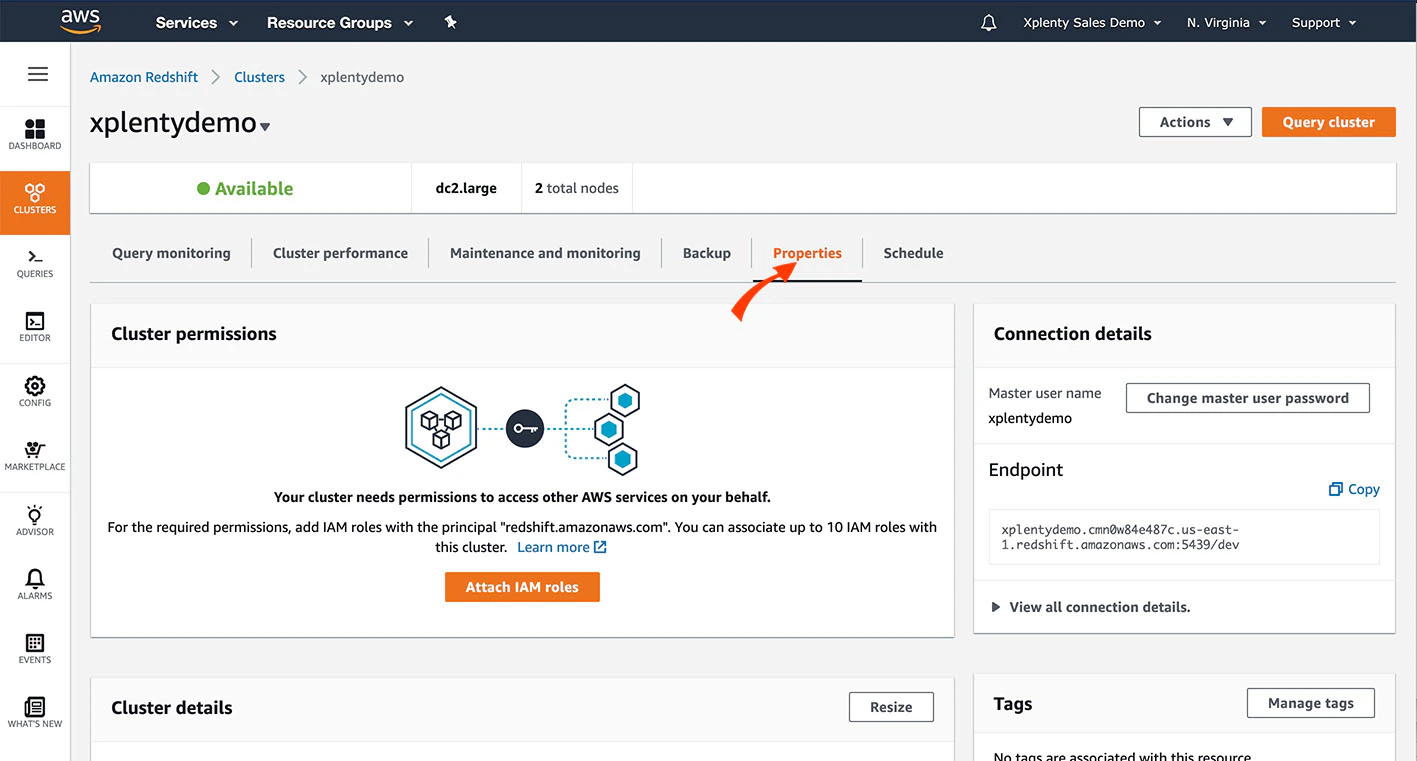

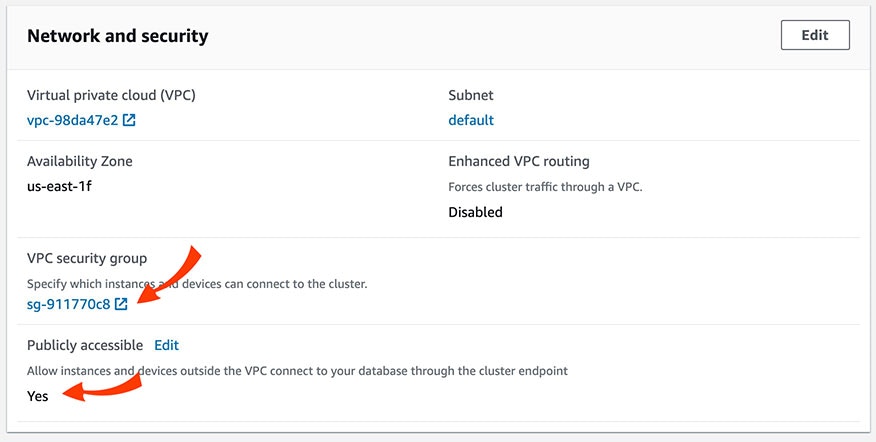

In the Redshift console

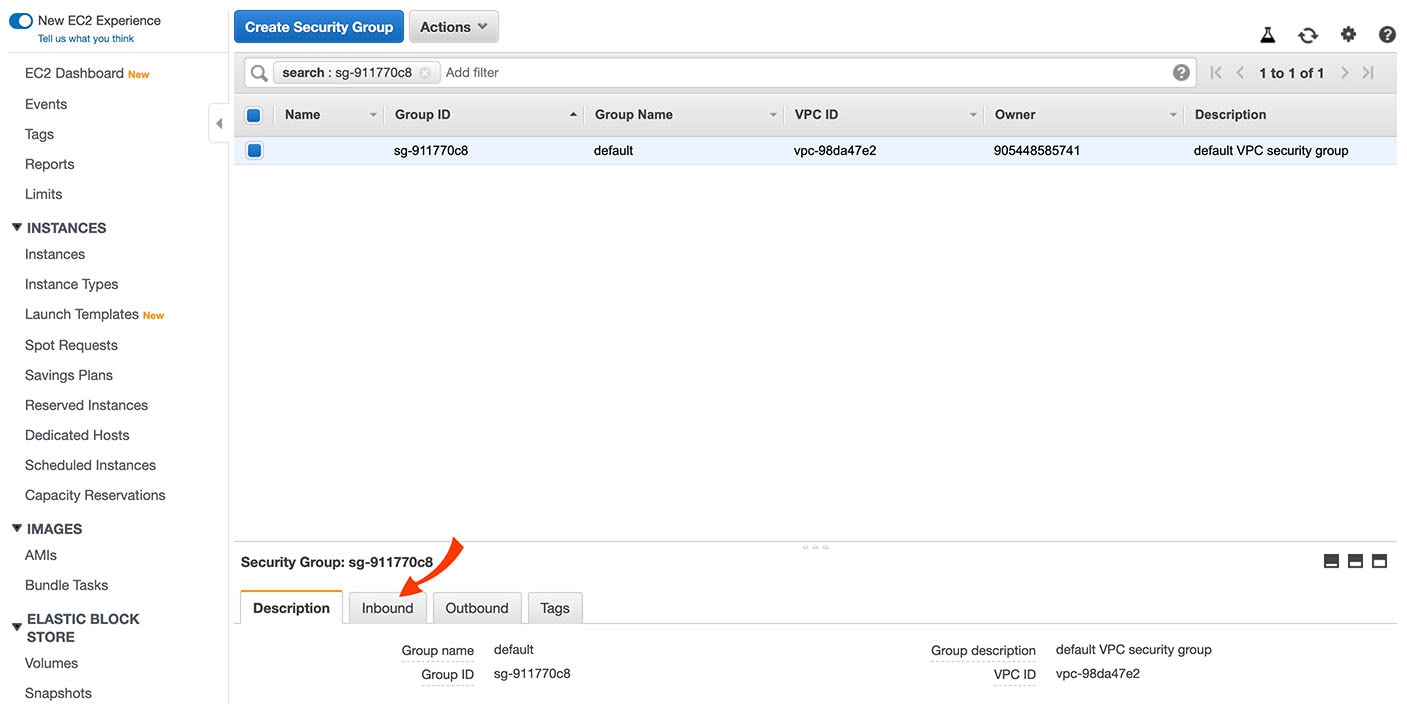

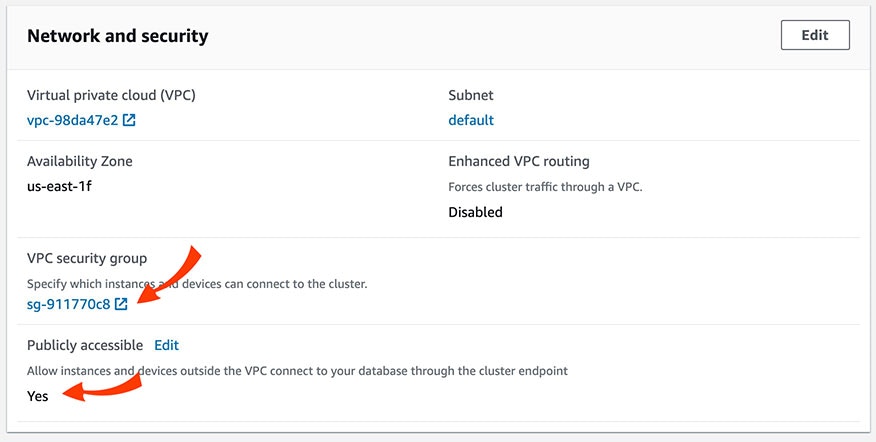

Scroll down to the Network and Security section. Make sure that Publicly Accessible is set to Yes. Then click the VPC Security Group to verify or modify its security rules.

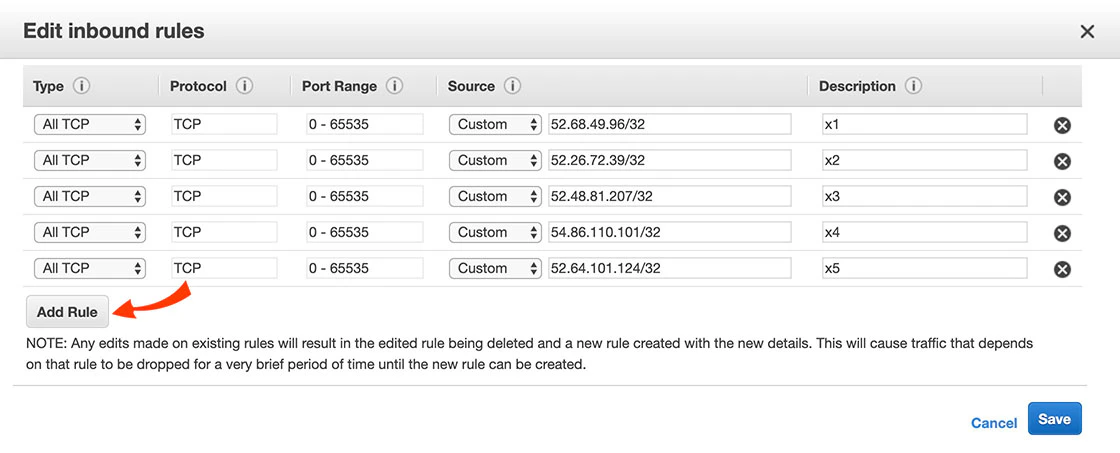

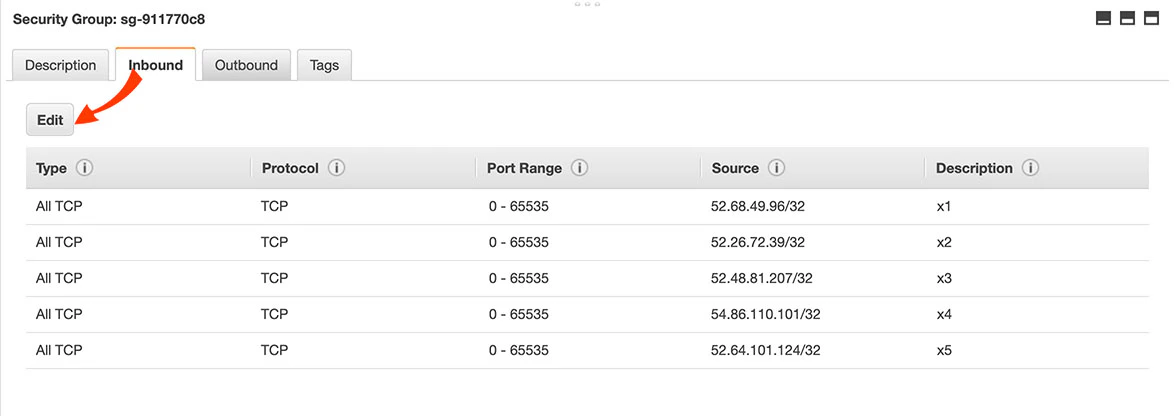

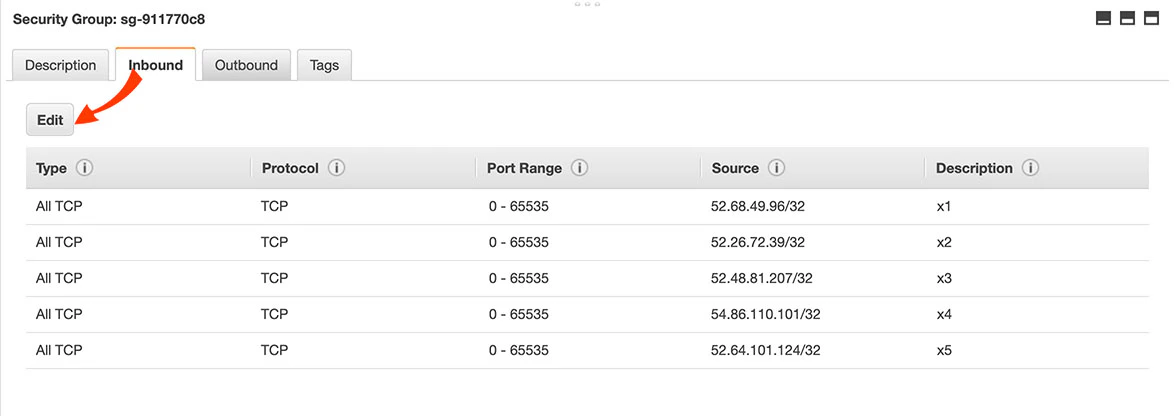

There should be rules for the IP addresses listed here. If those rules need to be altered or don’t exist, click Edit.

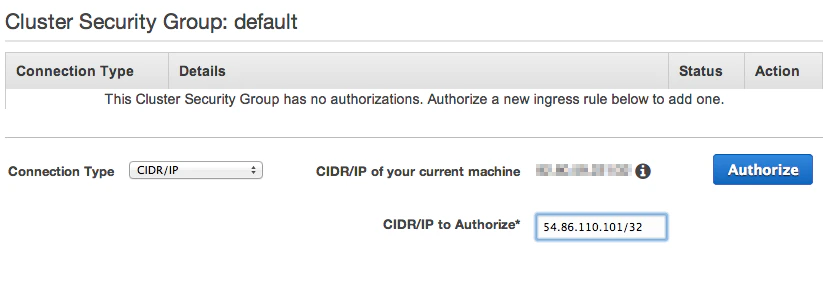

To verify or modify the security rules of an instance on EC2-Classic (without VPC)

In your Redshift Cluster Security Group, modify a rule or add a new rule for each IP address listed here:

- In the Connection Type drop-down list, choose CIDR/IP.

- In the CIDR/IP to Authorize field, enter the IP addresses from this list.
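
The CIDR/IP field expects CIDR notation, so a single IP address is written with a /32 suffix. The following is a minimal sketch of that conversion using Python's standard `ipaddress` module. The addresses are placeholders from the reserved documentation ranges, not Integrate.io ETL's real IP addresses, and `to_cidr` is a hypothetical helper name.

```python
import ipaddress


def to_cidr(address: str) -> str:
    """Return the CIDR form of an IPv4 address or block.

    A bare address such as "203.0.113.10" becomes "203.0.113.10/32".
    A value that is already a valid CIDR block is returned unchanged.
    A block with host bits set (for example "203.0.113.10/24") raises
    ValueError, because it does not describe a clean network range.
    """
    return str(ipaddress.ip_network(address, strict=True))


# Placeholder addresses (assumption): replace with the IP list from the docs.
EXAMPLE_IPS = ["203.0.113.10", "198.51.100.24/32"]

if __name__ == "__main__":
    for ip in EXAMPLE_IPS:
        print(to_cidr(ip))
```

Each printed value can be pasted into the CIDR/IP to Authorize field as its own rule.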

Create a Redshift user

- Create a Redshift user.

- Grant it the following permissions:

  - If you intend only to append data to a table, give the user the minimal permissions required to execute the COPY command.

  - If you intend to merge data into a table, give the user the minimal permissions required to execute the COPY command, create a table, and insert into and update your target tables.

  - Note that truncating a table requires the Integrate.io ETL user to either own the target table or have superuser access.
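
As a rough illustration of the permissions above, the sketch below generates Redshift SQL statements for the two cases. It assumes that COPY needs the INSERT privilege on the target table and that creating tables needs CREATE on the schema. The user, schema, and table names are hypothetical, and the exact privileges a merge needs in your environment may differ (for example, SELECT or DELETE may also be required).

```python
import re
from typing import List

# Only plain identifiers are accepted, because the names are interpolated
# directly into SQL text in this sketch.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def _check(*names: str) -> None:
    for name in names:
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unsupported identifier: {name!r}")


def append_grants(user: str, schema: str, table: str) -> List[str]:
    """Statements for append-only loads: COPY needs INSERT on the table."""
    _check(user, schema, table)
    return [
        f"GRANT USAGE ON SCHEMA {schema} TO {user};",
        f"GRANT INSERT ON TABLE {schema}.{table} TO {user};",
    ]


def merge_grants(user: str, schema: str, table: str) -> List[str]:
    """Statements for merge loads: COPY, create a table, insert, and update."""
    _check(user, schema, table)
    return [
        f"GRANT USAGE, CREATE ON SCHEMA {schema} TO {user};",
        f"GRANT INSERT, UPDATE ON TABLE {schema}.{table} TO {user};",
    ]


if __name__ == "__main__":
    # Hypothetical names (assumption): etl_user, public, products.
    print("CREATE USER etl_user PASSWORD '<password>';")
    for statement in merge_grants("etl_user", "public", "products"):
        print(statement)
```

Run the printed statements as a superuser or as the owner of the schema and table, after replacing the placeholder password.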

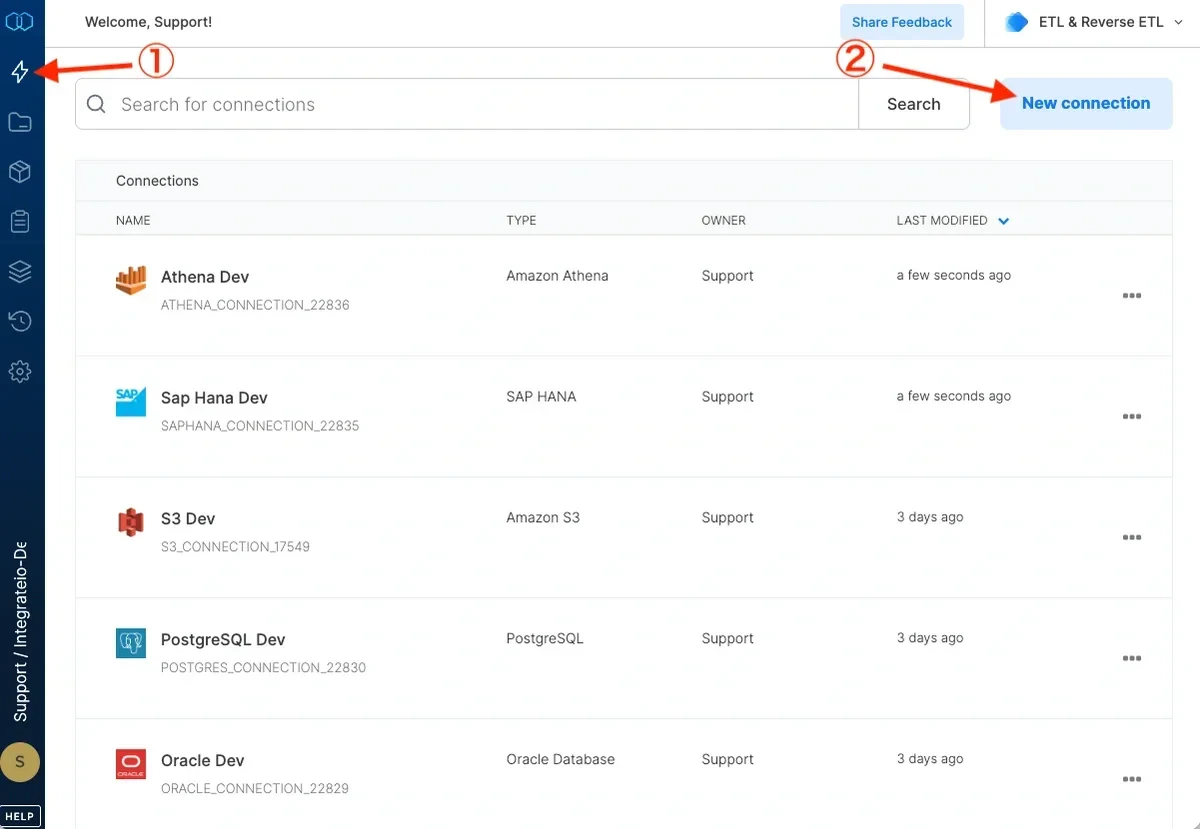

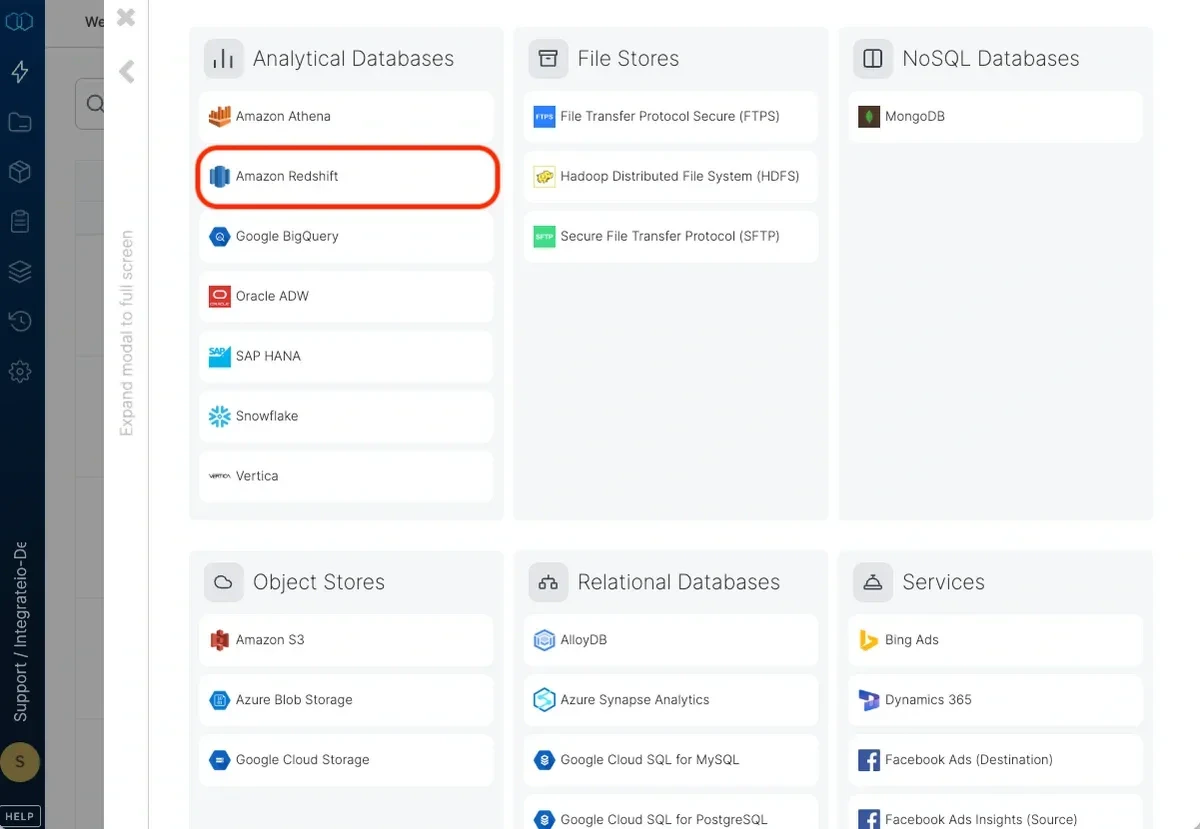

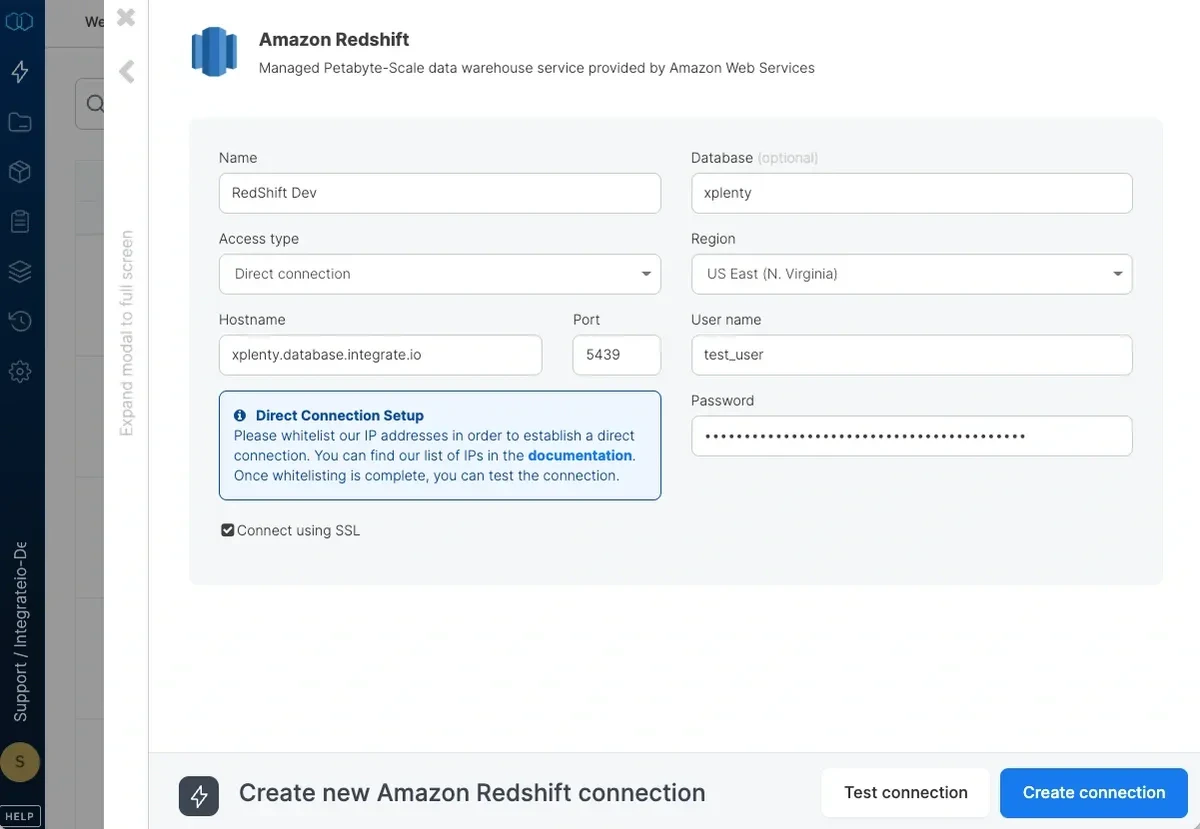

To define a connection in Integrate.io ETL to Amazon Redshift

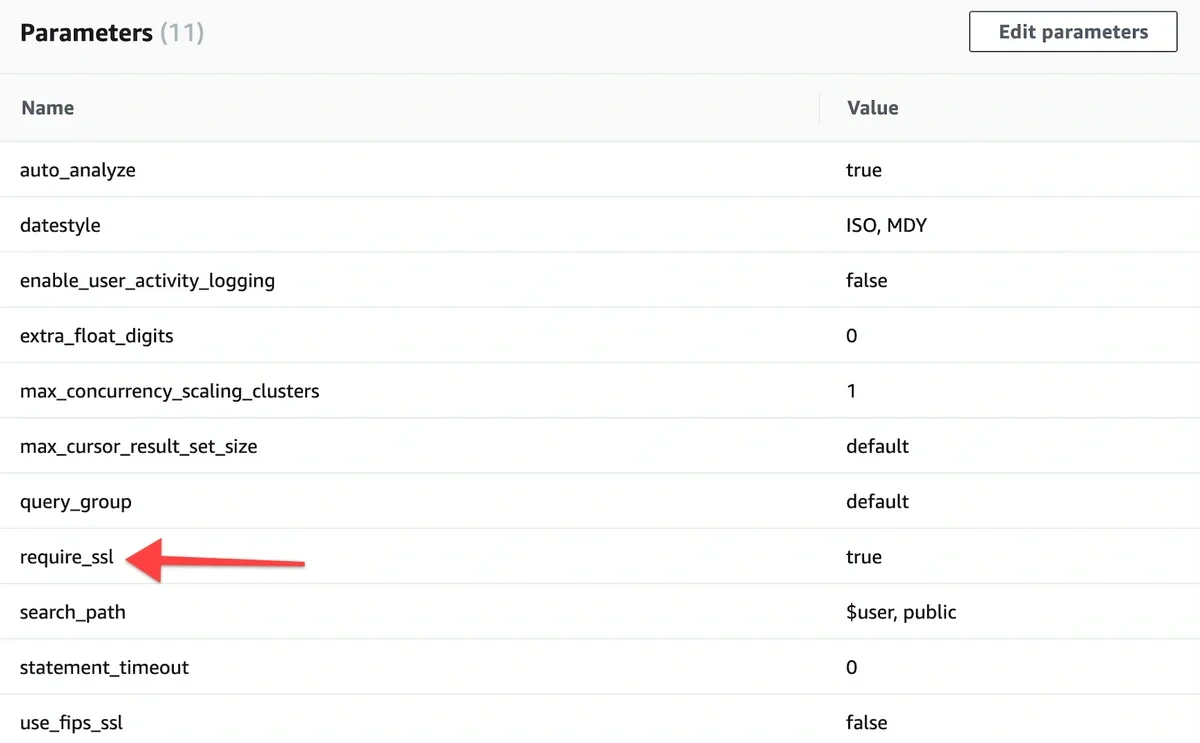

If you allow direct access from Integrate.io ETL’s IP addresses, enter the hostname and port. If direct access is not allowed, read more about setting up a tunnel connection here.

Enter the default database to use. If you leave it empty, the user’s default database will be used.

Set the region to the AWS region in which the Redshift cluster was created. If the region requires AWS Signature v4 (see list here), you may need our support team’s help to allow Integrate.io ETL to read from this Amazon Redshift connection.

Click Test connection. If the credentials are correct, a message confirming that the connection test was successful appears.
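
The hostname, port, default database, and region can usually be read off the cluster endpoint shown in the Redshift console. The sketch below splits such an endpoint into those fields. It assumes the common endpoint shape `<cluster>.<id>.<region>.redshift.amazonaws.com:<port>/<database>` and the default Redshift port 5439. The cluster name in the example is made up, and `parse_endpoint` is a hypothetical helper.

```python
from typing import NamedTuple, Optional

DEFAULT_REDSHIFT_PORT = 5439


class Endpoint(NamedTuple):
    host: str
    port: int
    database: Optional[str]
    region: Optional[str]


def parse_endpoint(endpoint: str) -> Endpoint:
    """Split 'host[:port][/database]' into the connection form fields."""
    host_port, _, database = endpoint.partition("/")
    host, _, port = host_port.partition(":")

    # Assumed host shape: <cluster>.<id>.<region>.redshift.amazonaws.com
    labels = host.split(".")
    region = None
    if len(labels) >= 5 and labels[-3:] == ["redshift", "amazonaws", "com"]:
        region = labels[-4]

    return Endpoint(
        host=host,
        port=int(port) if port else DEFAULT_REDSHIFT_PORT,
        database=database or None,  # None means: use the user's default
        region=region,  # None means: could not be inferred from the host
    )


if __name__ == "__main__":
    # Made-up endpoint (assumption) in the usual console format.
    example = "examplecluster.abc123xyz789.us-west-2.redshift.amazonaws.com:5439/dev"
    print(parse_endpoint(example))
```

If the region cannot be inferred from the hostname, look it up in the AWS console rather than guessing.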

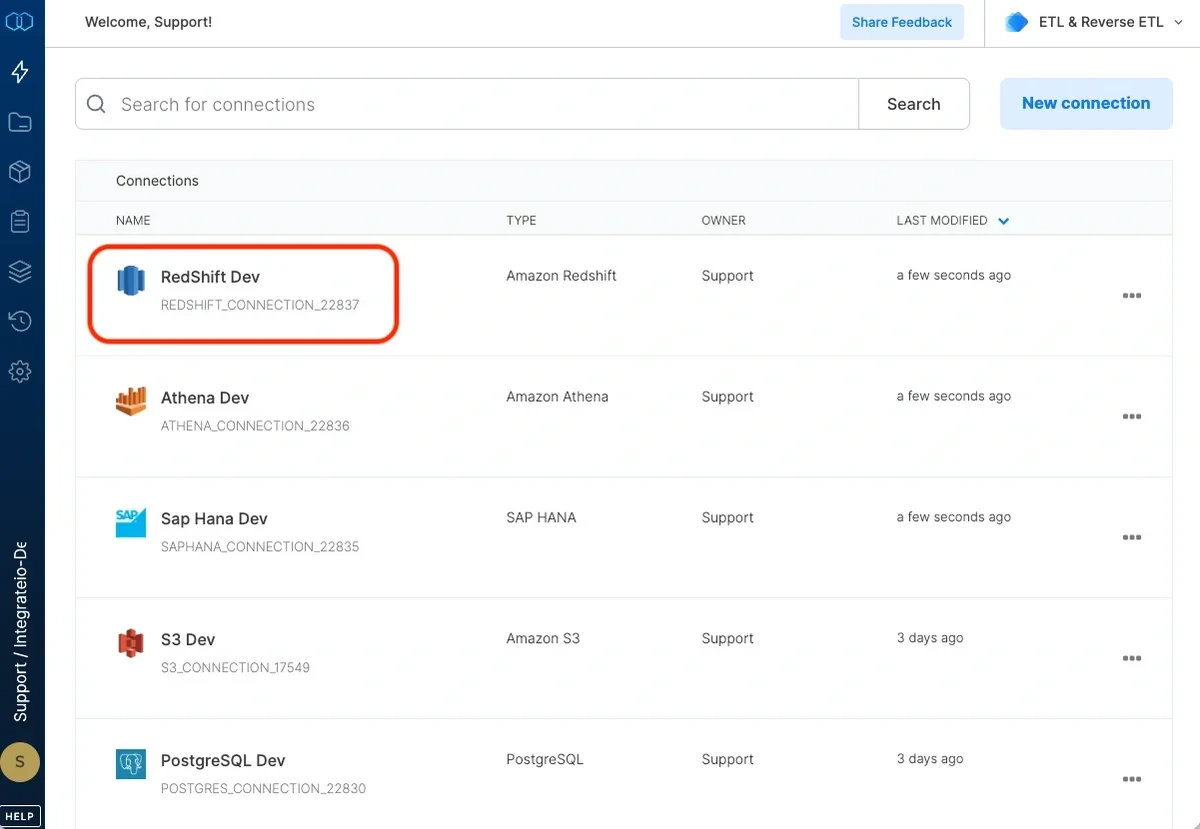

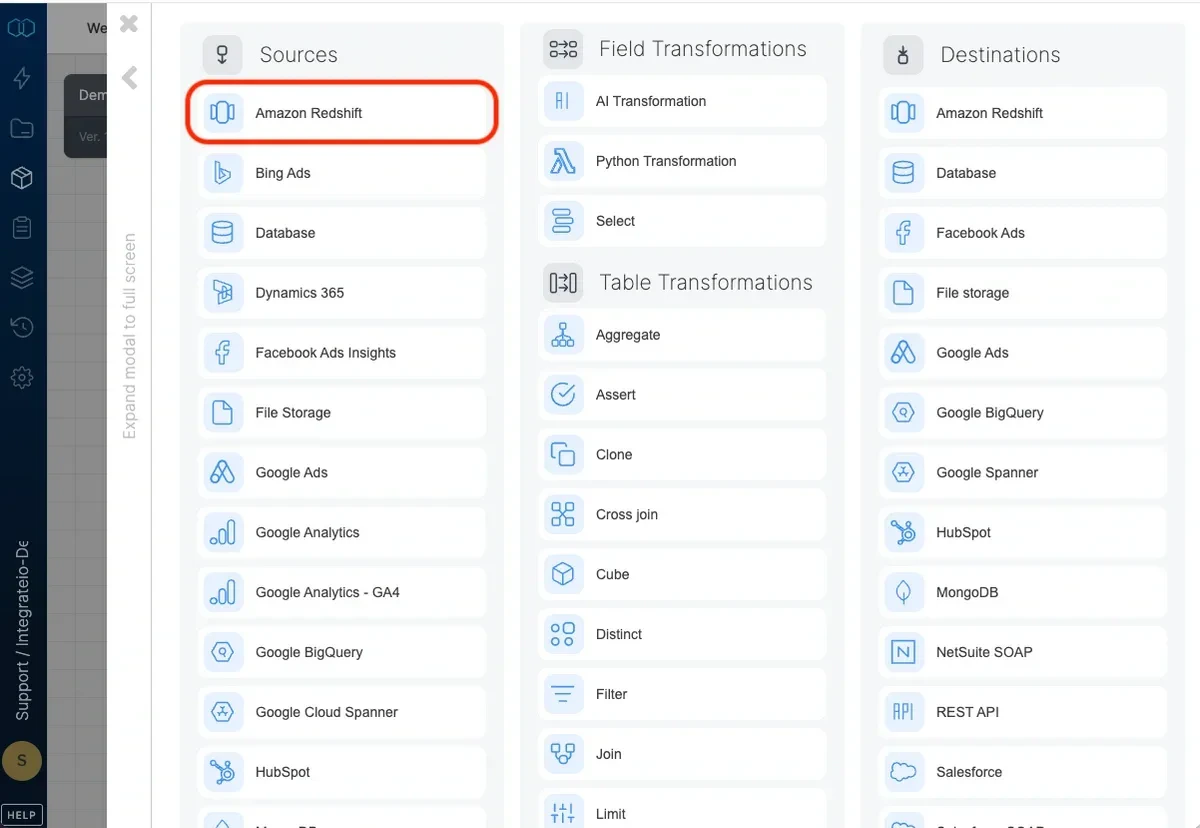

Connection

Select an existing Amazon Redshift connection or create a new one (for more information, see Allowing Integrate.io ETL access to my Redshift cluster).

Source Properties

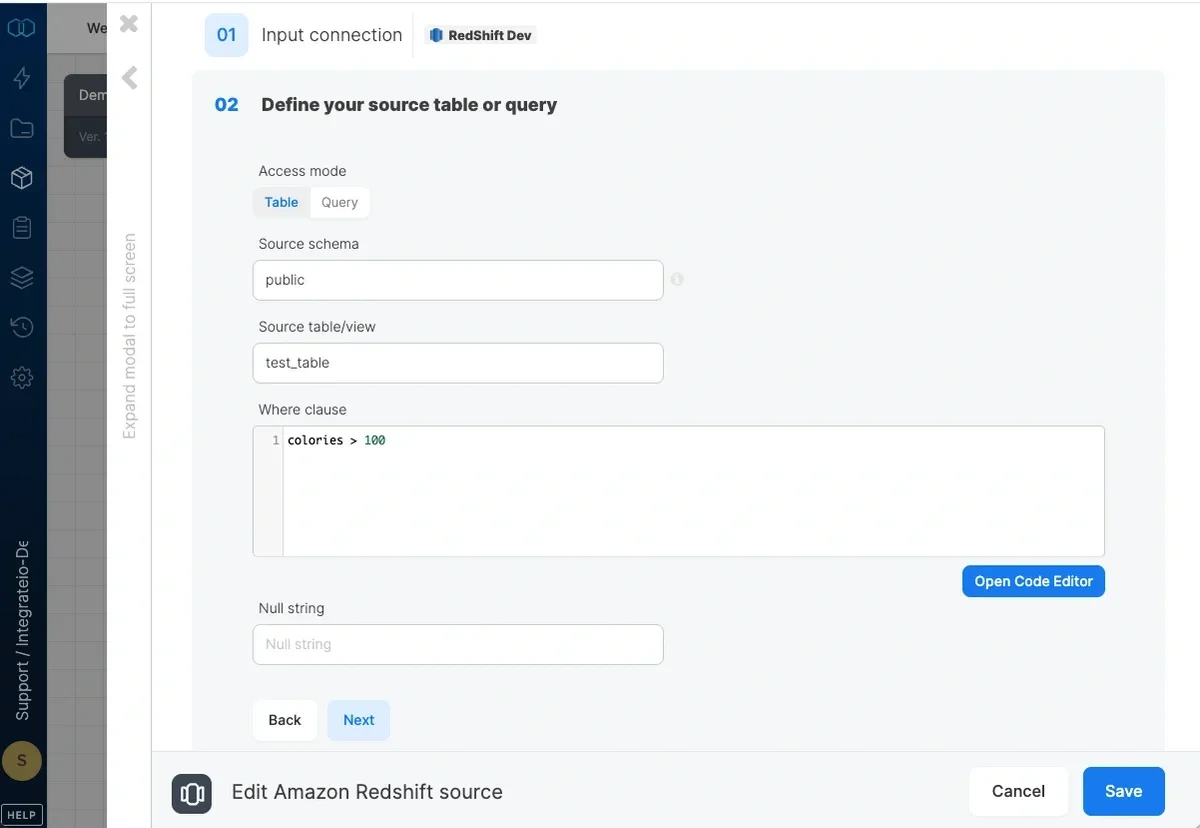

- Access mode - select table to extract an entire table/view or query to execute a query.

- Source schema - the source table’s schema. If empty, the default schema is used.

- Source table/view - the table or view name from which the data will be imported.

- Where clause - optional. Add predicates to the WHERE clause of the SQL query that is built to read the data from the database. Omit the keyword WHERE itself.

  Good: prod_category = 1 AND prod_color = 'red'

  Bad: WHERE prod_category = 1 AND prod_color = 'red'

- Query - type in a SQL query. Make sure to name all columns uniquely.

- Null string - NULL values in string columns are replaced with the string specified here. By default, NULL values appear as empty strings.
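
To show how the table, schema, where clause, and null string settings fit together, here is a minimal sketch. The exact SQL that Integrate.io ETL generates is not documented here, so the `SELECT * FROM ... WHERE ...` shape is an assumption, and `build_query` and `apply_null_string` are hypothetical helpers.

```python
import re
from typing import Optional


def build_query(table: str, schema: Optional[str] = None,
                where: Optional[str] = None) -> str:
    """Assemble a table-mode query from the source properties."""
    target = f"{schema}.{table}" if schema else table
    query = f"SELECT * FROM {target}"
    if where and where.strip():
        predicate = where.strip()
        # The where clause field must contain predicates only.
        if re.match(r"(?i)where\b", predicate):
            raise ValueError("omit the WHERE keyword; enter only the predicates")
        query += f" WHERE {predicate}"
    return query


def apply_null_string(value: Optional[str], null_string: str = "") -> str:
    """Replace a NULL (None) string value with the configured null string."""
    return null_string if value is None else value


if __name__ == "__main__":
    print(build_query("products", "public",
                      "prod_category = 1 AND prod_color = 'red'"))
    print(repr(apply_null_string(None)))
    print(repr(apply_null_string(None, "N/A")))
```

Passing the "Bad" form with a leading WHERE raises an error in this sketch, because prepending the keyword a second time would produce invalid SQL.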

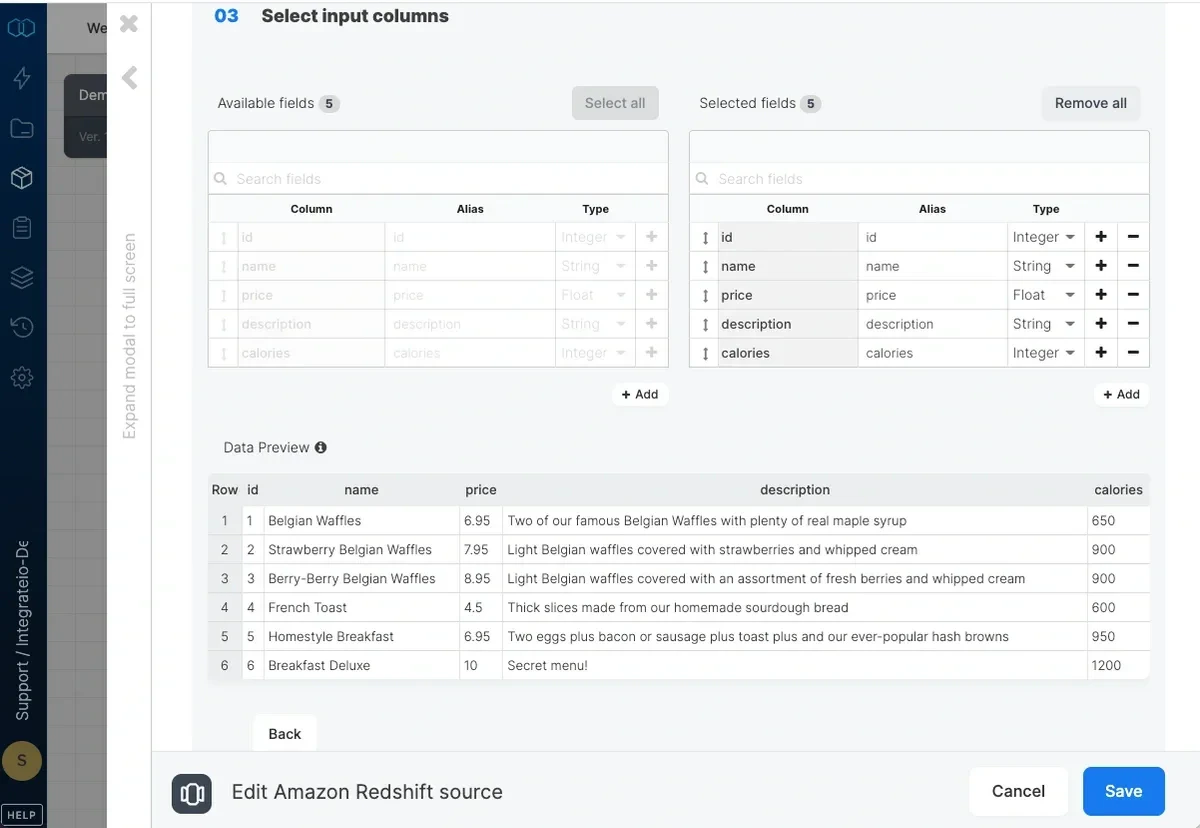

Source Schema

| Amazon Redshift | Integrate.io ETL |
|---|---|
| varchar, nvarchar, text | String |
| smallint, int | Integer |
| bigint | Long |
| decimal, real | Float |
| double precision | Double |
| timestamp, date | DateTime |
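
The mapping above can be expressed as a lookup table, for example to check a source schema before building a package. This is a sketch that covers only the types listed in the table. Types not listed raise `KeyError` instead of being guessed, and `integrate_type` is a hypothetical helper name.

```python
# Redshift type name -> Integrate.io ETL type, taken from the table above.
REDSHIFT_TO_INTEGRATE = {
    "varchar": "String",
    "nvarchar": "String",
    "text": "String",
    "smallint": "Integer",
    "int": "Integer",
    "bigint": "Long",
    "decimal": "Float",
    "real": "Float",
    "double precision": "Double",
    "timestamp": "DateTime",
    "date": "DateTime",
}


def integrate_type(redshift_type: str) -> str:
    """Look up the Integrate.io ETL type for a Redshift column type.

    Case is ignored and a length or precision suffix such as "(256)" or
    "(10,2)" is dropped before the lookup.
    """
    base = redshift_type.strip().lower().split("(", 1)[0].strip()
    return REDSHIFT_TO_INTEGRATE[base]


if __name__ == "__main__":
    for column_type in ["VARCHAR(256)", "bigint", "double precision"]:
        print(column_type, "->", integrate_type(column_type))
```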