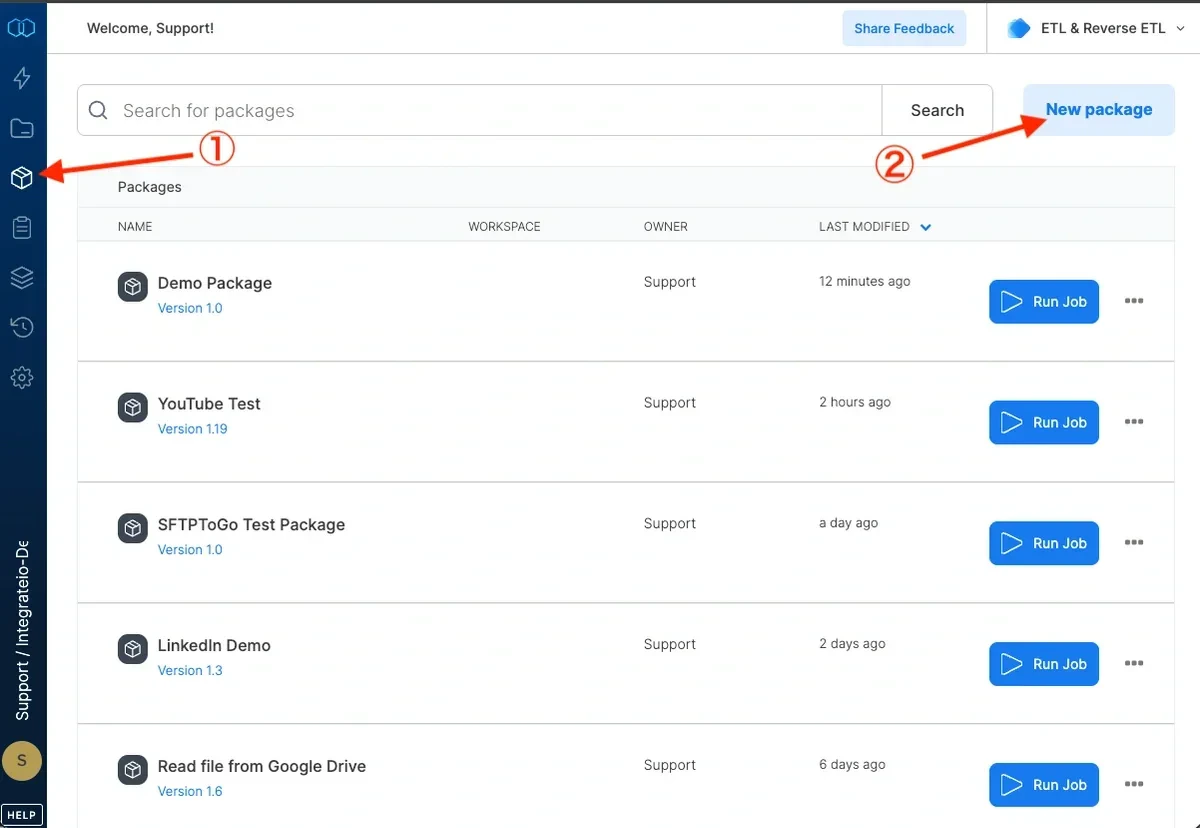

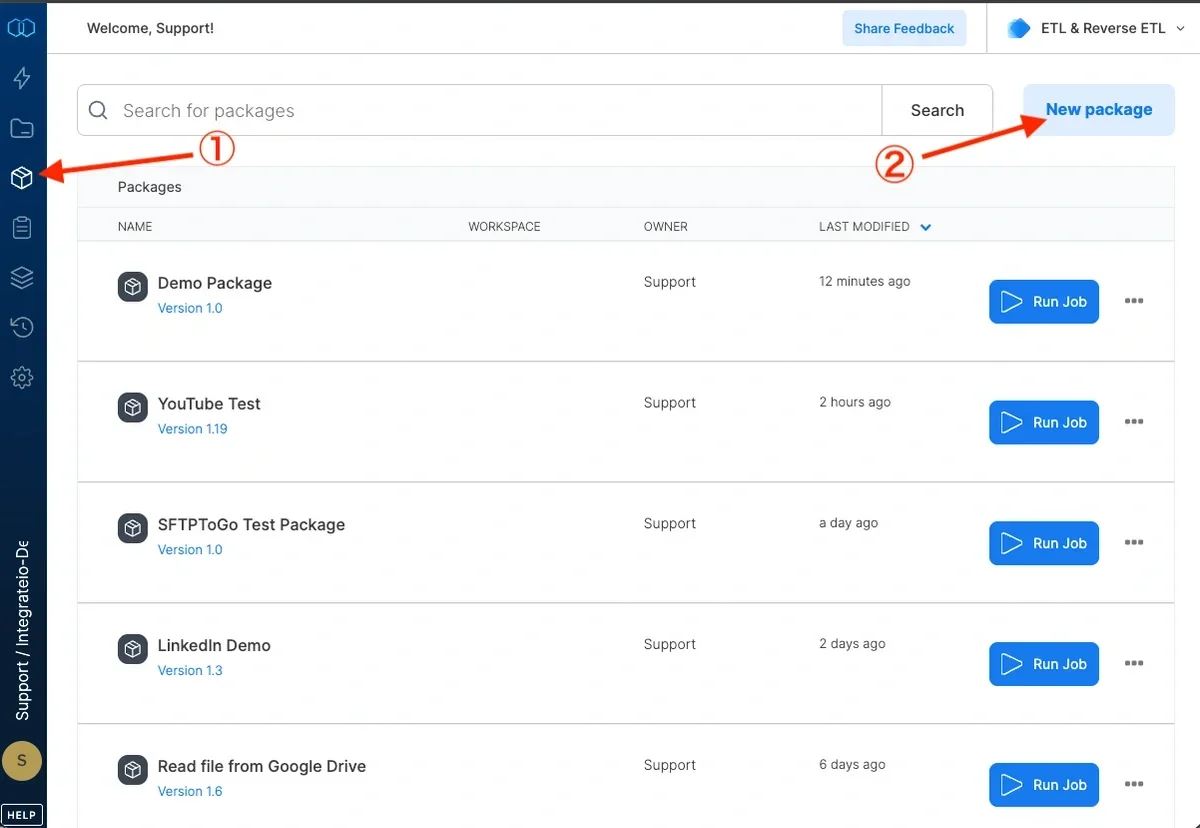

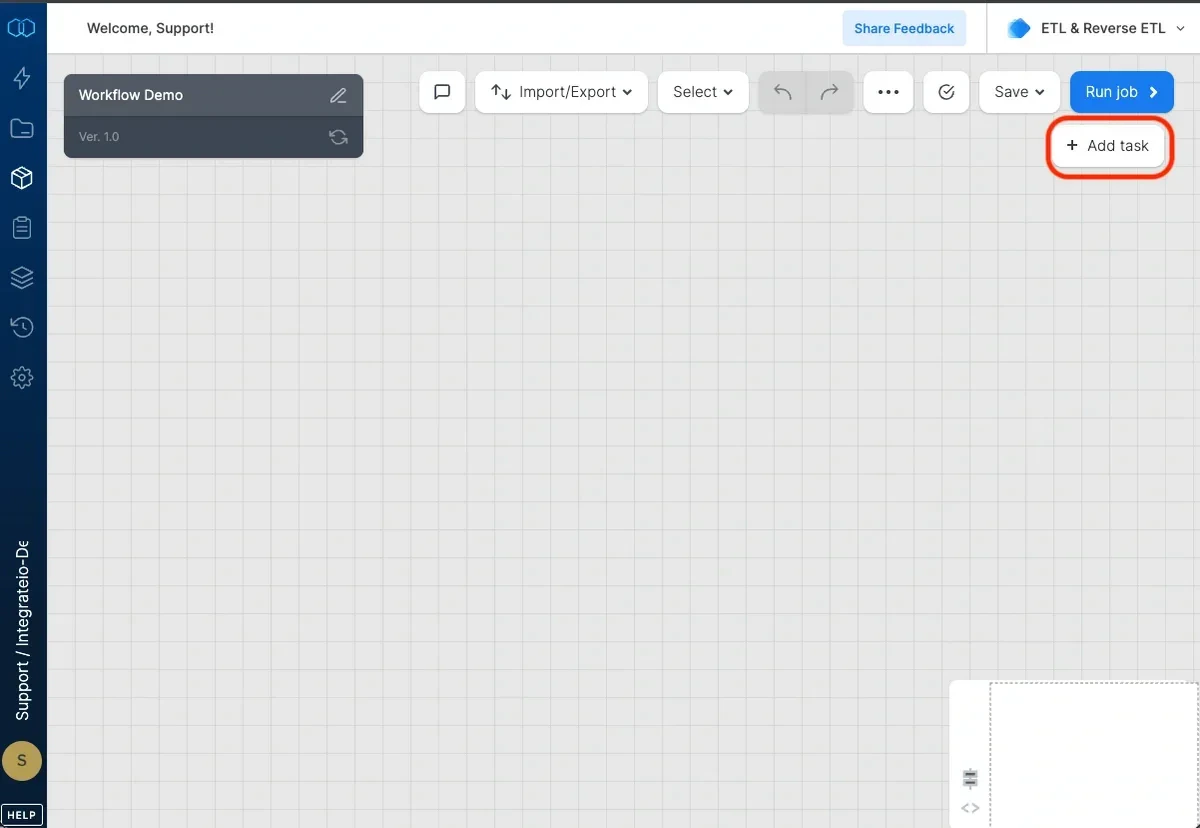

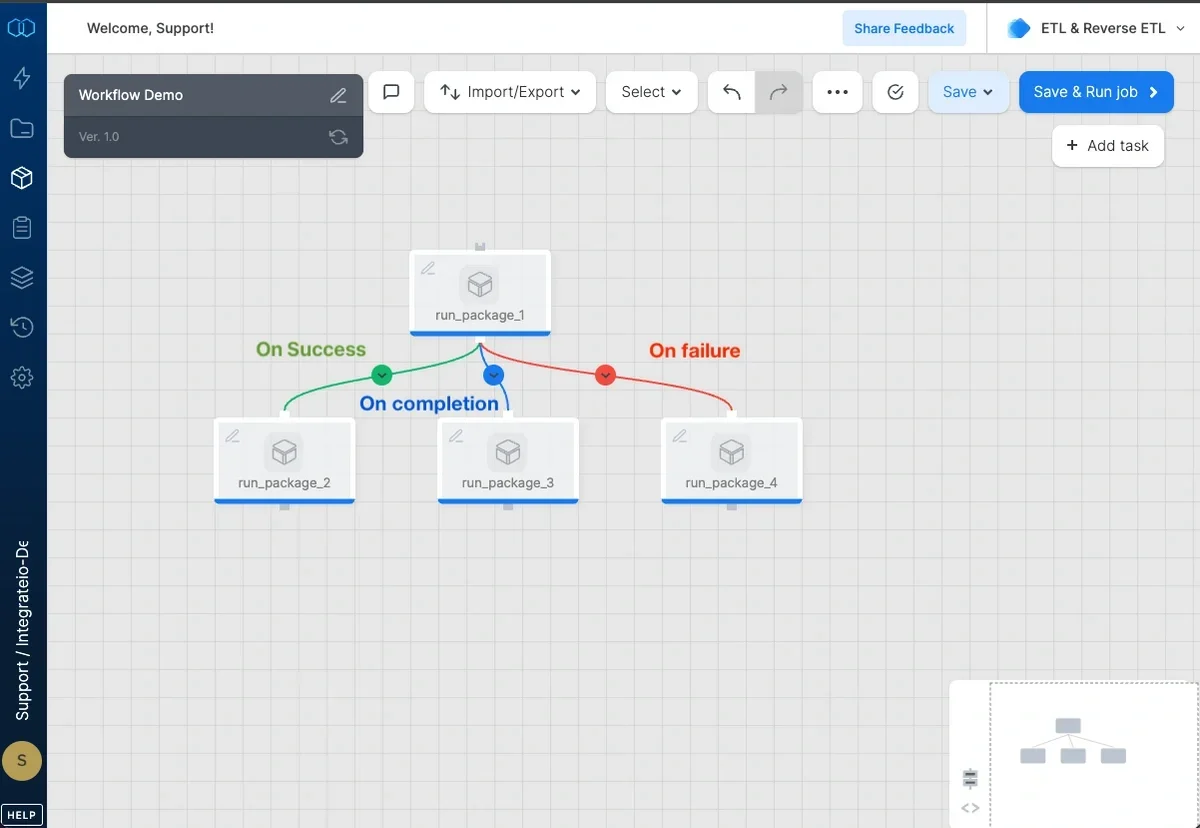

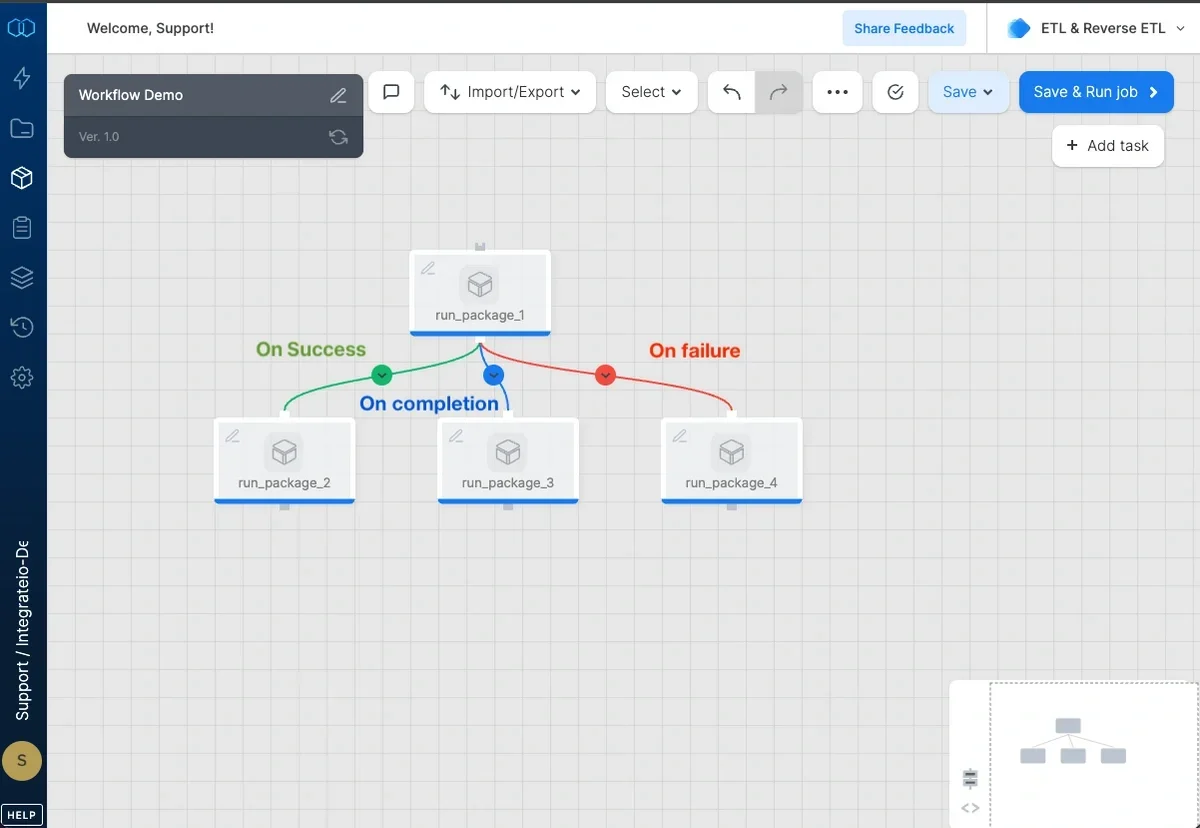

To create a workflow:

Connect tasks to define the order in which they execute. Click the connect icon on the dotted line to set the execution condition:

- On success (default) - the task runs only after the preceding task has executed successfully

- On failure - the task runs only after the preceding task has failed

- On completion - the task runs after the preceding task has completed, regardless of its completion status (failed/succeeded)
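The three execution conditions above can be modeled as a small decision function. This is only an illustrative sketch of the rules as described here, not the product's implementation; the names `Condition` and `should_run` are hypothetical.

```python
from enum import Enum


class Condition(Enum):
    """Execution conditions described above (names are illustrative)."""
    ON_SUCCESS = "on_success"        # default
    ON_FAILURE = "on_failure"
    ON_COMPLETION = "on_completion"


def should_run(condition: Condition, preceding_succeeded: bool) -> bool:
    """Return True if the downstream task should run after the preceding task finished."""
    if condition is Condition.ON_SUCCESS:
        return preceding_succeeded
    if condition is Condition.ON_FAILURE:
        return not preceding_succeeded
    # ON_COMPLETION: run regardless of the preceding task's status
    return True
```

For example, `should_run(Condition.ON_FAILURE, preceding_succeeded=False)` evaluates to `True`, which matches a cleanup or notification task wired to the failure path.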

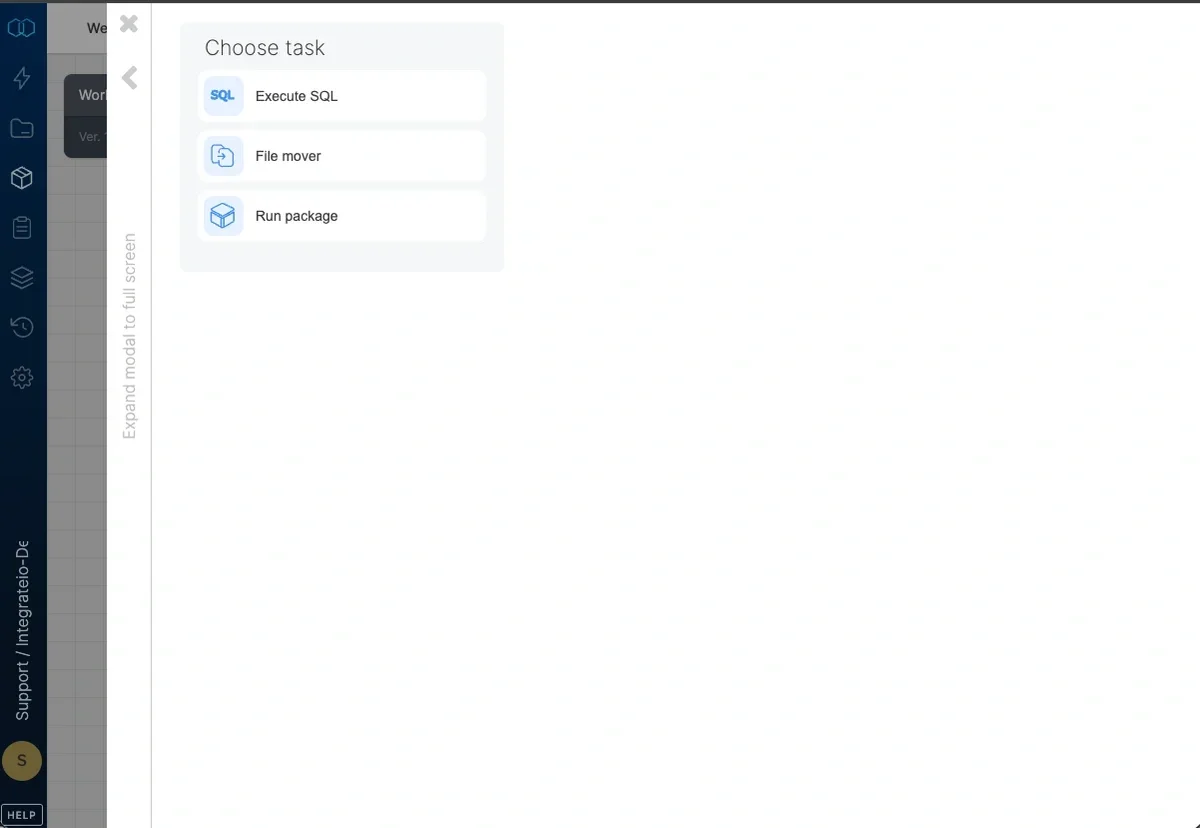

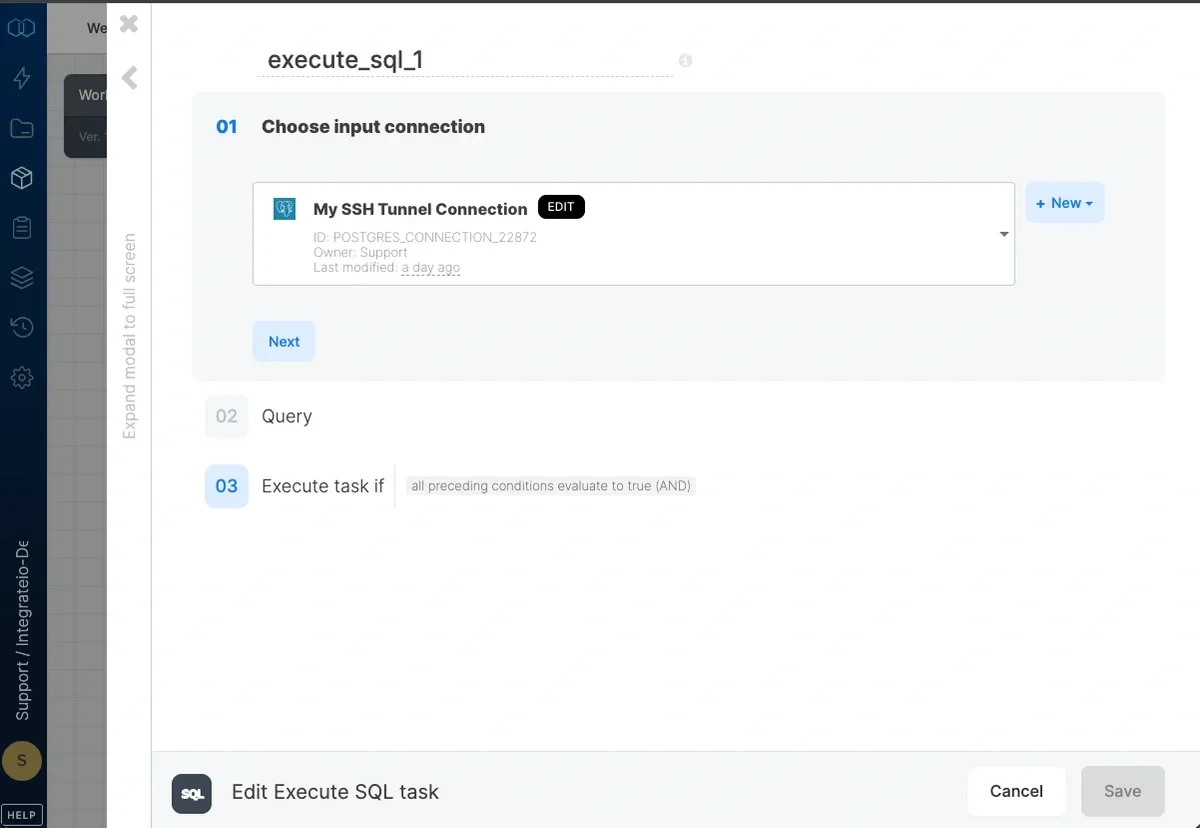

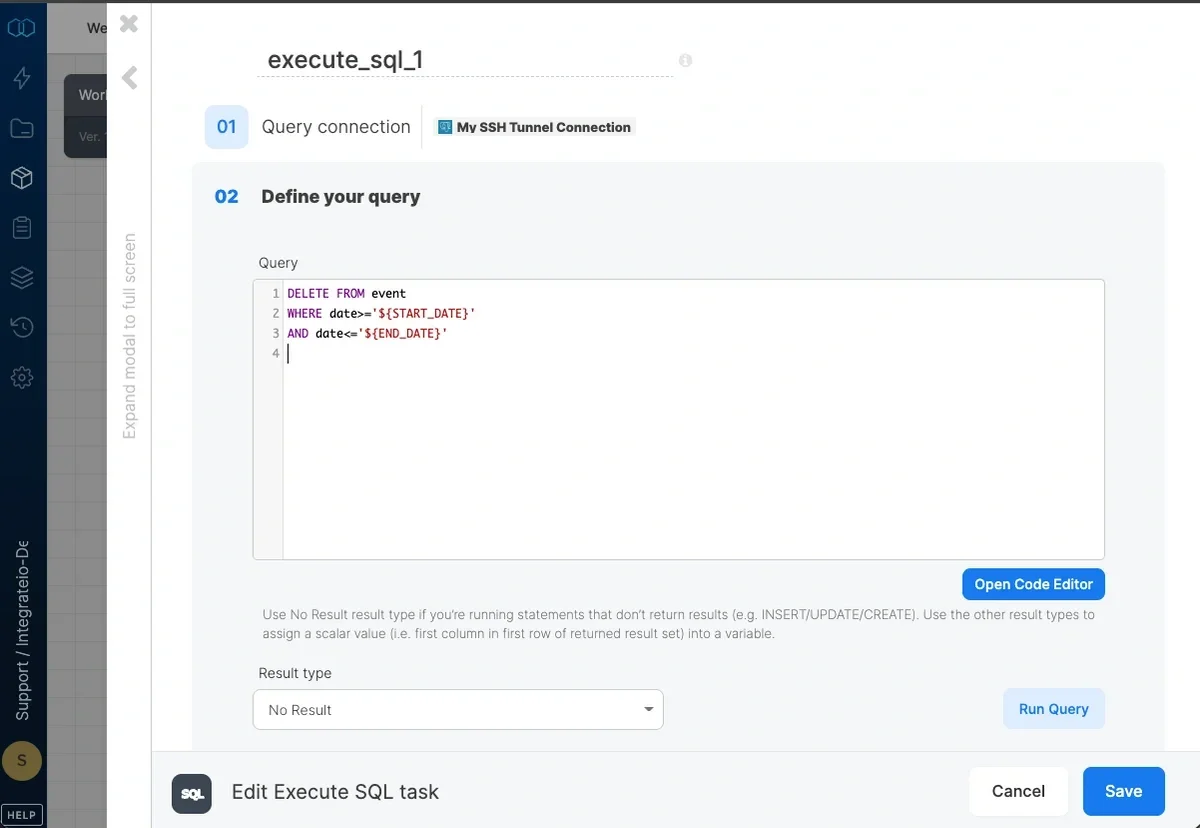

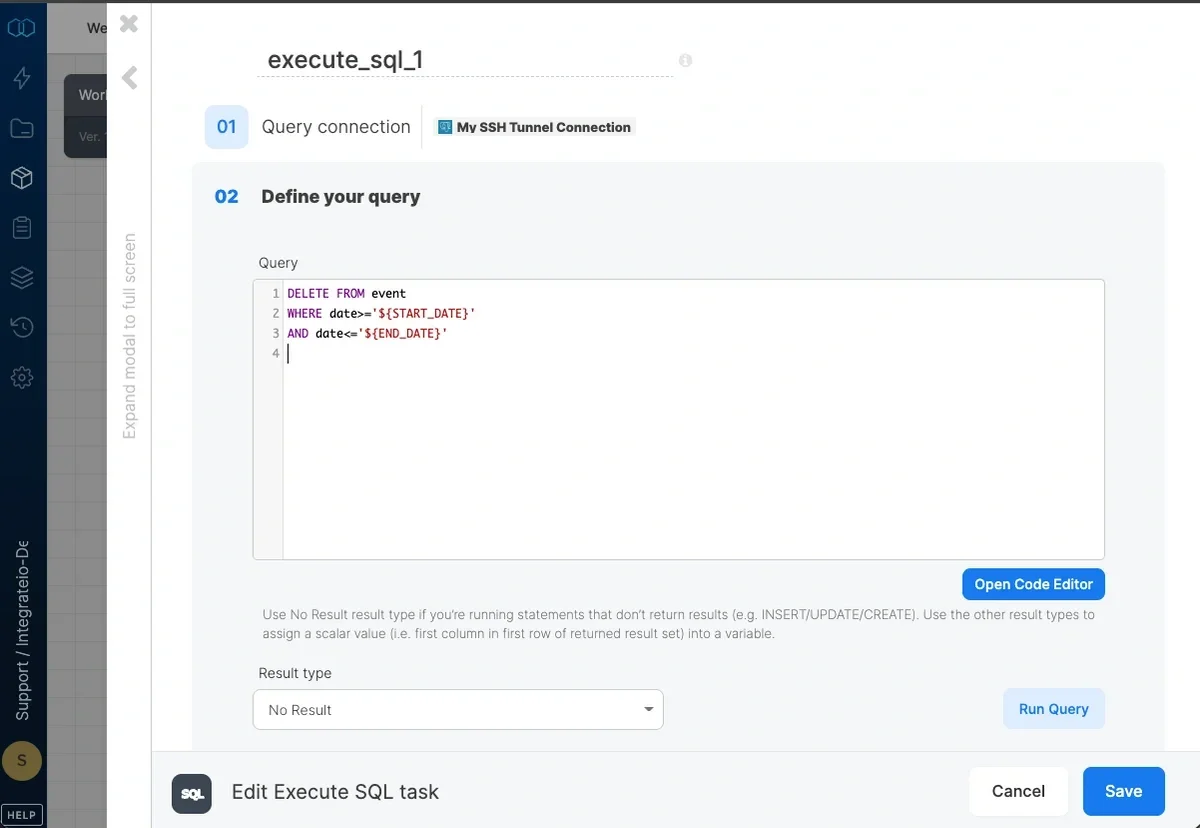

Execute SQL task

Write the SQL query to execute, and select the query result type from the Result type dropdown. You can test the query by clicking Test Query.

Note: BigQuery connections use standard SQL within the Execute SQL task.

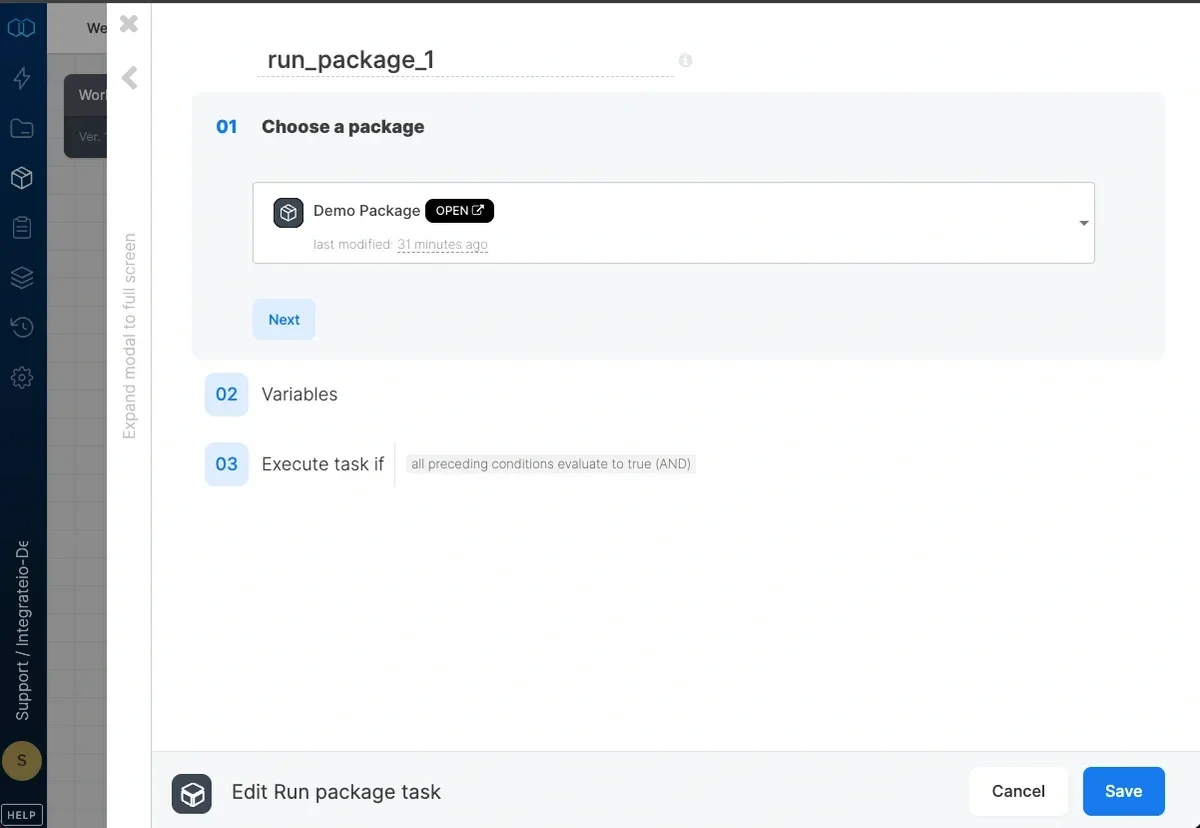

Run package task

Optionally, edit the dataflow variables to override their values with workflow variables.

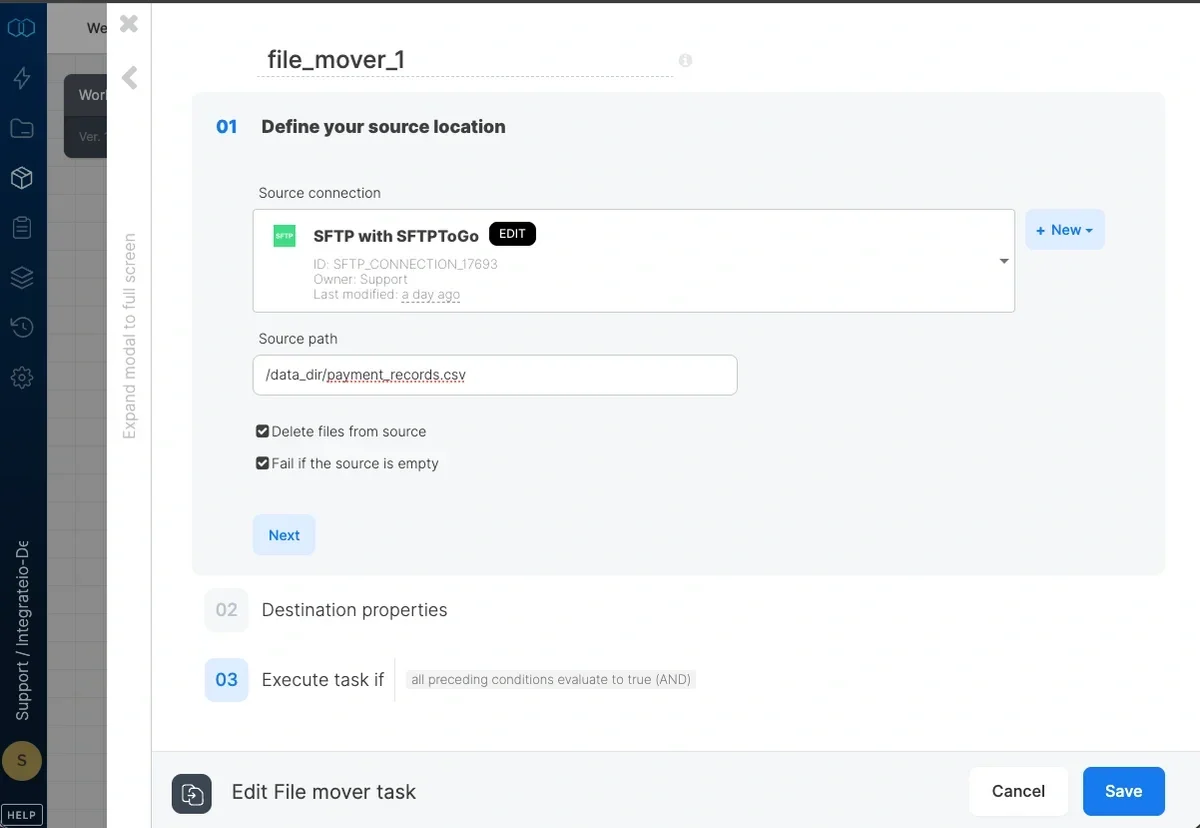

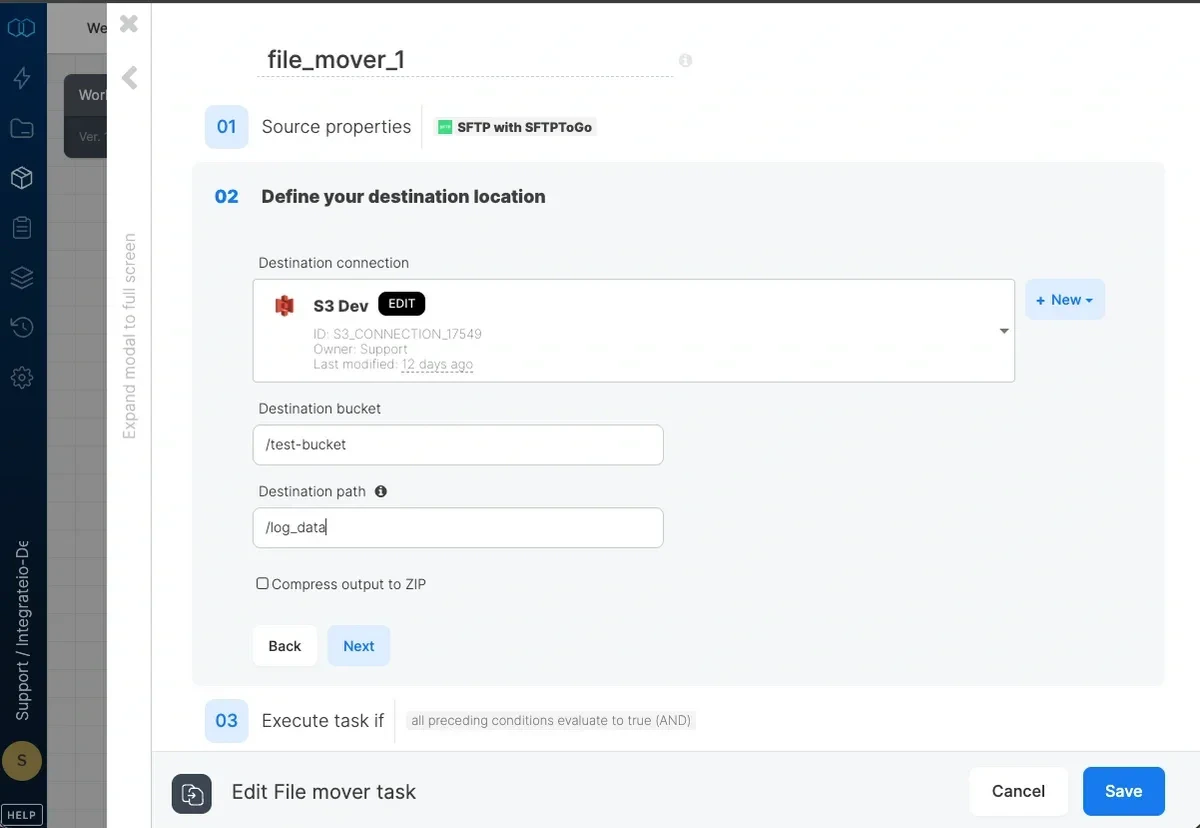

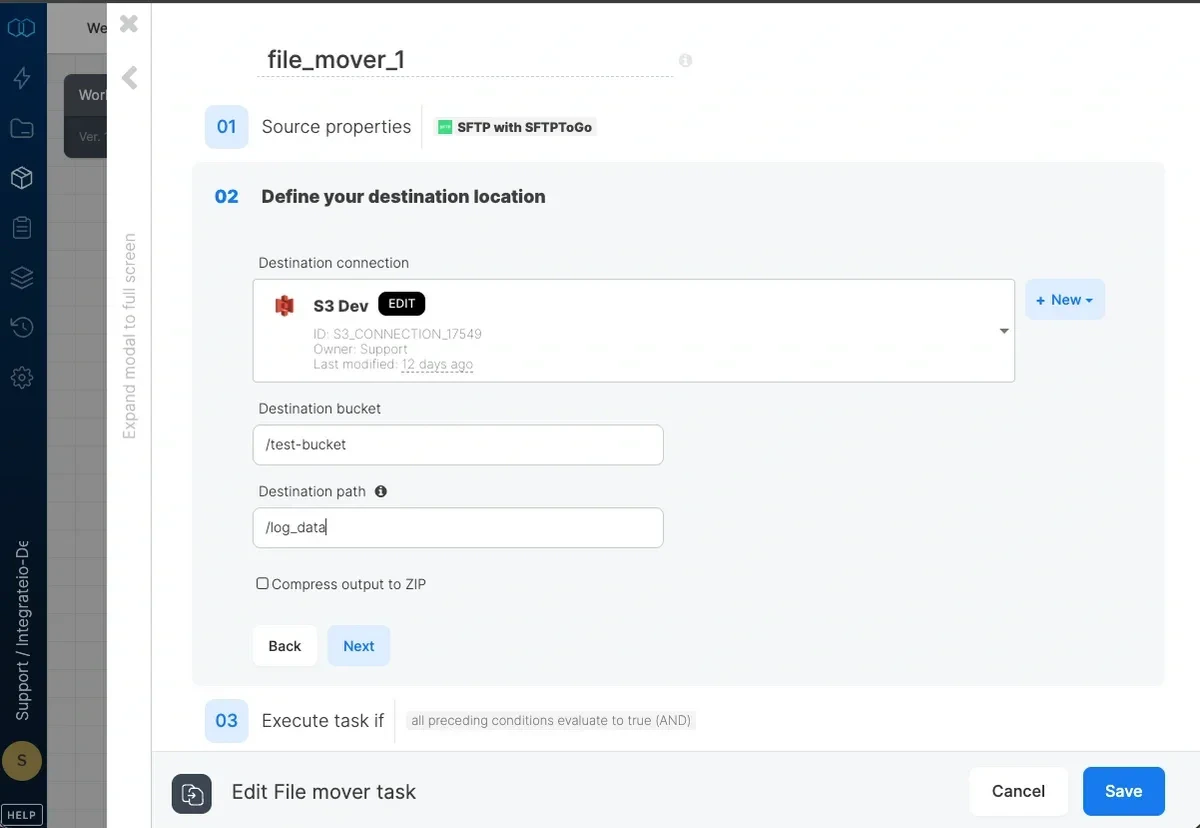

File mover task

You can enable the following options on the source connection:

- Delete files from source

- Fail if the source is empty

Enter the destination bucket (if the connection requires one) and the destination path. Any directories/folders in the path must already exist. To change the name of the file, define the path all the way to the file name and extension, for example directory_name/new_file_name.csv

Note: A package variable (`$`) and a wildcard file pattern (`*`) can be used in the bucket or path name.

Using Variables in Workflows

User variables can be defined at the workflow package level and can be used for both the Execute SQL Task and the Run Package Task.

Execute SQL Task

- Variables can also be assigned values by the Execute SQL task. This is useful when a variable needs a dynamic value that is used later in the workflow.

- When using variables in a SQL query, enclose the variable name in curly brackets, for example '${var\_name}'.

- Example of using variables within the Execute SQL task query:

DELETE FROM event

WHERE date >= '${START\_DATE}'

AND date <= '${END\_DATE}'

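To see what the `${...}` placeholders in the query expand to, the substitution can be imitated with Python's standard `string.Template`, which happens to use the same `${name}` syntax. This is a sketch of plain textual expansion only; the product performs its own substitution, and the variable values below are hypothetical.

```python
from string import Template

# The example query from above, with ${...} placeholders left in place
query = Template(
    "DELETE FROM event\n"
    "WHERE date >= '${START_DATE}'\n"
    "AND date <= '${END_DATE}'"
)

# Hypothetical workflow variable values, for illustration only
variables = {"START_DATE": "2024-01-01", "END_DATE": "2024-01-31"}

# substitute() raises KeyError if a placeholder has no matching variable
rendered = query.substitute(variables)
print(rendered)
```

Because the expansion is textual, the single quotes around each placeholder are what turn the substituted values into SQL string literals.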

Run Package Task

Workflow package level variables can be used to override dataflow level variables. Here’s an example:

Note: Here package variables are referenced as $variable_name, which differs from the '${variable_name}' form used in the Execute SQL Task above.

- If both workflow and dataflow variables have the same name, you still have to assign the workflow variable to the dataflow variable.

- If a task dataflow uses a variable that isn’t defined at the dataflow level but is assigned a value at the workflow level, the dataflow task will use the workflow variable value.
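The two rules above can be read as a resolution order for each variable the dataflow uses. The sketch below models that reading; it is an assumption-based illustration rather than the product's actual logic, and the function and parameter names are hypothetical.

```python
def resolve_dataflow_variables(dataflow_vars, workflow_vars, assignments, referenced):
    """Resolve the value of each variable a dataflow references.

    dataflow_vars: variables defined at the dataflow level (name -> value)
    workflow_vars: variables defined at the workflow level (name -> value)
    assignments:   explicit overrides set on the Run Package task
                   (dataflow variable name -> workflow variable name)
    referenced:    names of the variables the dataflow uses
    """
    resolved = {}
    for name in referenced:
        if name in assignments:
            # An explicit assignment overrides the dataflow-level value
            resolved[name] = workflow_vars[assignments[name]]
        elif name in dataflow_vars:
            # A matching name alone does not override the dataflow value
            resolved[name] = dataflow_vars[name]
        elif name in workflow_vars:
            # Not defined in the dataflow: fall back to the workflow value
            resolved[name] = workflow_vars[name]
        else:
            raise KeyError(f"variable {name!r} is not defined at any level")
    return resolved


# Hypothetical values for illustration
dataflow_vars = {"region": "us"}
workflow_vars = {"region": "eu", "run_date": "2024-01-01"}

without_assignment = resolve_dataflow_variables(
    dataflow_vars, workflow_vars, {}, ["region", "run_date"]
)
with_assignment = resolve_dataflow_variables(
    dataflow_vars, workflow_vars, {"region": "region"}, ["region", "run_date"]
)
```

In this model, `region` keeps the dataflow value `"us"` until it is explicitly assigned, while `run_date` takes the workflow value in both cases because the dataflow does not define it.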
